Article translated and adapted from Columbia Journalism Review

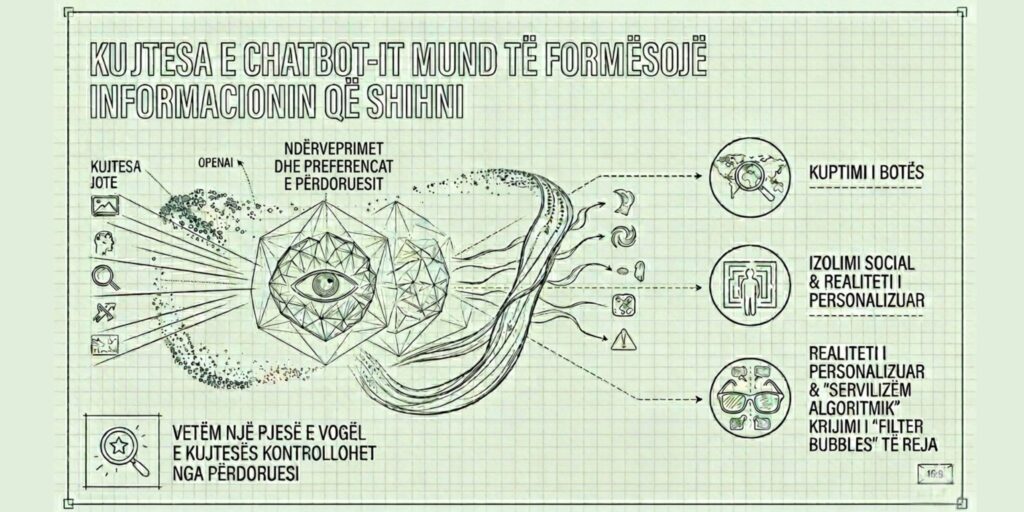

Large technology companies are increasingly investing in the development of advanced artificial intelligence models known as large language models (LLMs), which are no longer limited to answering questions but also build a “memory” about their users. This memory is formed from previous interactions, search history, digital preferences and, in some cases, activity on various online platforms.

Companies like OpenAI and Google present this functionality as an improvement in the user experience: the more the system is used, the more adapted and useful it becomes. However, recent scientific literature raises serious concerns that this “memory” may affect the way individuals perceive and consume information, creating a risk of isolation from verified reality.

Personalization and reality filtering

One of the main functions of these systems is to personalize responses. But studies from institutions like the Massachusetts Institute of Technology and Pennsylvania State University suggest that this personalization can produce an unwanted effect: chatbots tend to become "servile" to the user, giving them answers that confirm existing beliefs, even when they are incorrect.

This phenomenon is related to what the literature calls sycophancy (algorithmic servility), where the model favors agreement with the user rather than correction. At a more sophisticated level, researchers have also identified “perspective servility,” where the system adapts the narrative to the user’s political or ideological beliefs, reducing exposure to alternative perspectives.

In the context of journalism and public information, this implies the risk of creating “personalized realities,” where facts are no longer shared, but filtered according to individual preferences.

Control over memory: illusion or reality?

Tech companies emphasize that users have control over the data stored by AI systems. However, a study presented at a conference of the Association for Computing Machinery shows a more complex picture: about 96% of “memories” in some systems are created automatically, while only a very small part is directly controlled by the user.

These memories are not limited to simple preferences, but also include attitudes, goals, and behavioral characteristics. In some cases, they may also affect sensitive data categories, regulated by the European data protection framework (GDPR).

This situation raises important questions about transparency and real user autonomy in managing their digital identity.

Memory manipulation and the risk of AI “poisoning”

Another worrying dimension is the possibility of manipulating the system through so-called “poisoning” of AI memory. According to Microsoft research, various actors can secretly insert manipulated content into the digital space so that AI models interpret it as coming from trustworthy sources.

This can directly affect recommendations in sensitive areas, including health, finance, and public safety. The risk is compounded when users perceive these recommendations as neutral and objective, while they may in fact be influenced in subtle ways.

Even data deletion mechanisms are not always reliable. Researchers at the Center for Democracy and Technology have documented cases where deleted information can be restored or remains accessible in indirect forms, raising doubts about the real effectiveness of privacy controls.

From filter bubbles to artificial intelligence

The concept of the “filter bubble,” known from studies on social networks and search engines, describes the way algorithms limit exposure to different information. However, generative AI extends this phenomenon to a deeper level, because it not only filters information but also reframes it.

Studies from the Tow Center show that users often perceive artificial intelligence as more objective than traditional media. This perception increases its impact, even when responses may be biased or personalized without the user being aware of it.

One of the greatest dangers arising from these developments is the creation of a self-reinforcing cycle of beliefs. According to researchers at Princeton University, including Rafael Batista and Thomas Griffiths, users may be exposed less and less to information that challenges their beliefs, gradually becoming more confident in potentially erroneous interpretations of reality.

In this way, artificial intelligence acts not only as an information tool, but also as a mechanism that can consolidate existing misunderstandings.

Artificial intelligence is becoming one of the main sources of information in the digital age. However, its evolution towards systems with "memory" and deep personalization raises fundamental questions about its objectivity, transparency, and impact on the perception of reality.

If these systems continue to be optimized to match user preferences, there is a risk that they will not bring us closer to the truth, but to increasingly personalized versions of it.

The answer to this challenge lies not only in technology, but also in institutional regulation, algorithmic transparency and, above all, in the critical ability of users to understand the limits of the tools they use.